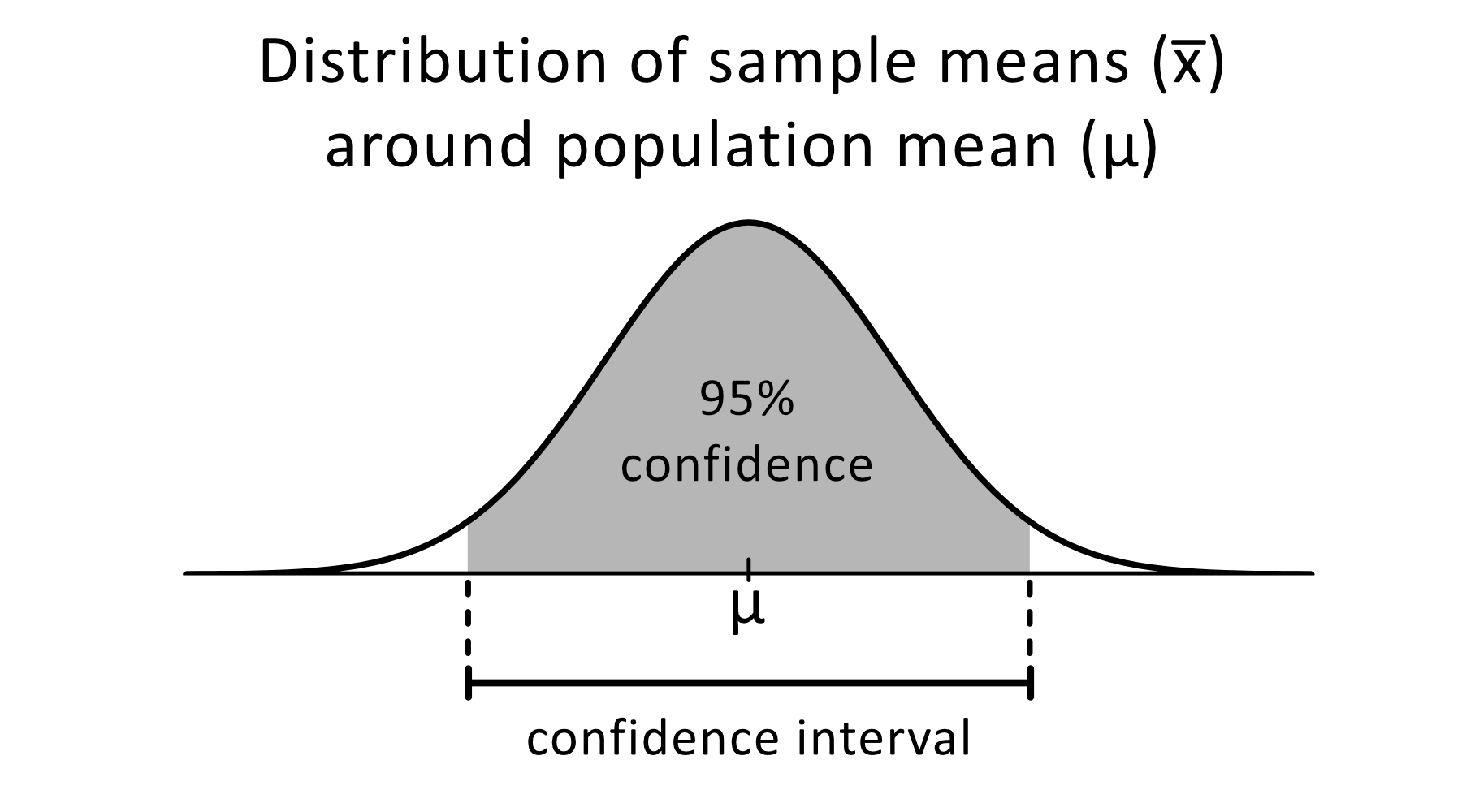

Developing good predictive models hinges upon accurate performance evaluation and comparisons. However, when evaluating machine learning models, we typically have to work around many constraints, including limited data, independence violations, and sampling biases. Confidence intervals are no silver bullet, but at the very least, they can offer an additional glimpse into the uncertainty of the reported accuracy and performance of a model.

Confidence intervals around accuracy measurements can greatly enhance the communication of research results as well as impact the reviewing process. In my experience as a reviewer, I have seen many research articles that adopted this suggested minimal standard by including uncertainty estimates. However, many articles still omit any form of uncertainty estimates, and, moving forward, I hope we can increase the adoption as it is usually just a small thing to add.

This article outlines different methods for creating confidence intervals for machine learning models. Note that these methods also apply to deep learning. This article is purposefully short to focus on the technical execution without getting bogged down in details; there are many links to all the relevant conceptual explanations throughout this article.

Lastly, it’s worth highlighting that the big picture is to measure and report uncertainty. Confidence intervals are one way to do that. However, it is also helpful to include the average performance over different dataset splits or random seeds with the variance or standard deviation. I sometimes adopt this simpler approach as it is more straightforward to explain. But since this article is about confidence intervals, let’s define what they are and how we can construct them.

- Defining a Dataset and Model for Hands-On Examples
- Method 1: Normal Approximation Interval Based on a Test Set
- Method 2: Bootstrapping Training Sets – Setup Step
- A Note About Replacing Independent Test Sets with Bootstrapping
- Method 2.1: A t Confidence Interval from Bootstrap Samples
- Method 2.2: Bootstrap Confidence Intervals Using the Percentile Method
- Method 2.3: Reweighting the Bootstrap Samples via the
- Method 2.4: Taking the Reweighting One Step Further: The
- Method 3: Bootstrapping the Test Set Predictions
- Method 4: Confidence Intervals from Retraining Models with Different Random Seeds
- Comparing the Different Confidence Interval Methods
- Confidence Intervals and the True Model Performance
- Bonus: Creating Confidence Intervals with TorchMetrics

In a nutshell, what is a confidence interval anyway? A confidence interval is a method that computes an upper and a lower bound around an estimated value. The actual parameter value is either inside or outside these bounds. Imagine that we have a statistic like a sample mean that we calculated from a sample drawn from an unknown population. Our goal is to estimate a population parameter with this statistic; for example, we could estimate the population mean using the sample mean. However, most of the time, the estimated and actual values are not exactly the same. Here, we can use the confidence interval to quantify the uncertainty of that estimate.

It is a common convention to use a 95% confidence interval in practice, but how do we interpret it? First, let’s assume we have access to the population.
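To make the idea of a 95% confidence interval around a sample mean more concrete, here is a minimal sketch of a small simulation. It is not taken from the article: the helper names `mean_confidence_interval` and `coverage`, the Gaussian population, and all parameter values are illustrative assumptions, and the interval uses a simple normal approximation (z = 1.96). The sketch repeatedly draws samples from a population whose mean we pretend to know and checks how often the computed interval contains that mean.

```python
import math
import random
import statistics


def mean_confidence_interval(sample, z=1.96):
    """Normal-approximation interval around the sample mean.

    z=1.96 corresponds to roughly 95% confidence (an assumption of this sketch).
    """
    mean = statistics.fmean(sample)
    std_err = statistics.stdev(sample) / math.sqrt(len(sample))
    return mean - z * std_err, mean + z * std_err


def coverage(true_mean=5.0, true_std=2.0, n=100, repetitions=1000, seed=123):
    """Fraction of repeated samples whose interval contains the true mean.

    The Gaussian population and its parameters are hypothetical stand-ins
    for a population we would normally not have access to.
    """
    rng = random.Random(seed)
    hits = 0
    for _ in range(repetitions):
        sample = [rng.gauss(true_mean, true_std) for _ in range(n)]
        lower, upper = mean_confidence_interval(sample)
        hits += lower <= true_mean <= upper
    return hits / repetitions


if __name__ == "__main__":
    print(f"Empirical coverage: {coverage():.3f}")
```

Under these assumptions, the printed fraction should land close to 0.95. Any single interval either contains the true mean or it does not; the 95% describes how often the procedure succeeds across many repeated samples.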
